Environmental considerations

How much electricity does AI use?

Electricity consumption and AI

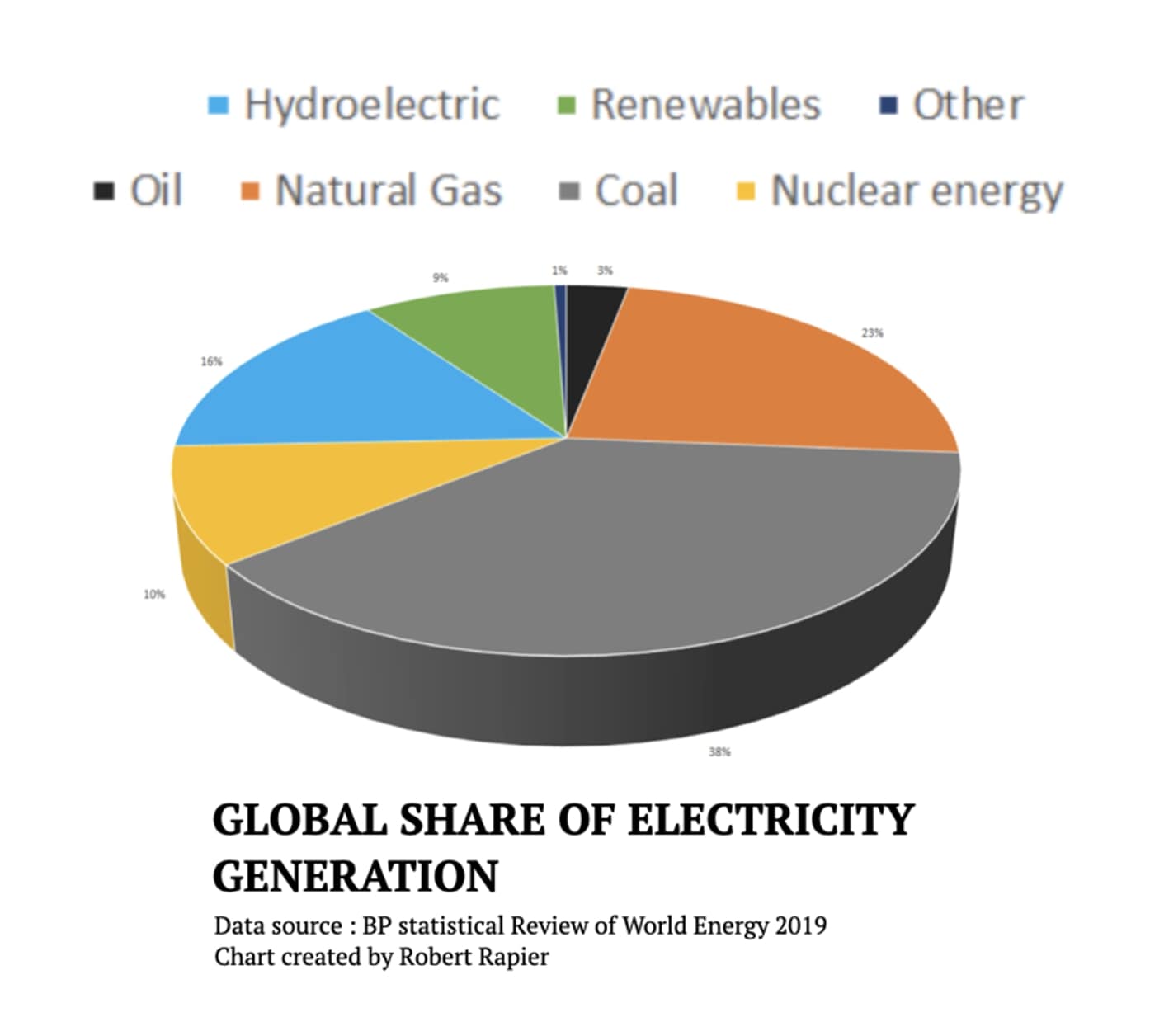

Large language models require vast amounts of electricity to train and operate, and approximately two-thirds of the world’s electricity is generated by burning fossil fuels. Trade finance professionals may therefore face the ethical question of whether their use of AI systems contributes to climate change.

AI systems are trained and hosted in data centres, which house some of the biggest computer clusters in the world.

The electricity consumption of AI systems raises questions about its direct and indirect environmental impact.

A Goldman Sachs report estimates that data centres accounted for 1-2% of worldwide electricity usage in 2024 and projects this share to rise to 3-4% by 2030. The same report predicts that Europe will need to spend $1 trillion by 2030 to prepare its power grid for AI, a hefty sum.
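To put the report’s figures in perspective, the short Python sketch below compares the two ranges. It assumes the percentages are shares of total global electricity consumption and treats each range’s midpoint as a point estimate (a simplification of ours, not the report’s); the helper `share_growth` is hypothetical. If global consumption also grows over the period, the absolute increase in data-centre demand would be larger than the change in share suggests, which this sketch does not model.

```python
from __future__ import annotations


def midpoint(low: float, high: float) -> float:
    """Return the midpoint of a reported range, e.g. 1-2% -> 1.5%."""
    return (low + high) / 2


def share_growth(start: tuple[float, float], end: tuple[float, float], years: int) -> dict[str, float]:
    """Compare two reported percentage ranges (hypothetical helper).

    Returns the smallest, midpoint and largest ratio of the end share to the
    start share, plus the compound annual growth rate implied by the midpoints.
    """
    min_ratio = end[0] / start[1]  # most conservative reading: 2% -> 3%
    max_ratio = end[1] / start[0]  # most aggressive reading: 1% -> 4%
    mid_ratio = midpoint(*end) / midpoint(*start)
    cagr = mid_ratio ** (1 / years) - 1  # annual growth rate of the share itself
    return {"min_ratio": min_ratio, "mid_ratio": mid_ratio, "max_ratio": max_ratio, "cagr": cagr}


# Figures from the Goldman Sachs report cited above: 1-2% in 2024, 3-4% by 2030.
result = share_growth(start=(1.0, 2.0), end=(3.0, 4.0), years=2030 - 2024)
for name, value in result.items():
    print(f"{name}: {value:.3f}")
```

With these inputs the data-centre share grows by a factor of 1.5 to 4 (about 2.3 at the midpoints), which corresponds to roughly 15% compound growth per year in the share alone.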

“Data centers—the engines powering everything from AI to traditional computing to cryptocurrency—currently account for about 2% of global electricity consumption. Of this, AI accounts for roughly 0.5%.”
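The quote gives two percentages that are easy to misread. Assuming (our interpretation) that “roughly 0.5%” refers to AI’s share of global electricity rather than of data-centre electricity, a one-line calculation shows what fraction of data-centre consumption AI represents. The helper name `share_of_subset` is hypothetical.

```python
def share_of_subset(part_pct: float, whole_pct: float) -> float:
    """Express part_pct (a share of global electricity) as a fraction of whole_pct."""
    if whole_pct <= 0:
        raise ValueError("whole_pct must be positive")
    return part_pct / whole_pct


data_centres_pct = 2.0  # data centres' share of global electricity (from the quote)
ai_pct = 0.5            # AI's share of global electricity (our reading of the quote)

ai_fraction = share_of_subset(ai_pct, data_centres_pct)
print(f"AI uses about {ai_fraction:.0%} of data-centre electricity")
```

Under that reading, AI accounts for about a quarter of data-centre electricity use. If the 0.5% instead referred to data-centre consumption, AI’s share of global electricity would be only 0.01%.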

While training neural networks is energy-intensive, its carbon footprint currently pales in comparison to other sources of emissions. The academic paper “The Carbon Footprint of Machine Learning Training Will Plateau, Then Shrink” (Patterson et al., 2022) estimates that training OpenAI’s GPT-3 produced 552 metric tons of carbon dioxide, equivalent to the lifetime emissions of roughly 30 average American internal combustion cars. Training is the most energy-intensive stage in the life cycle of state-of-the-art AI systems.

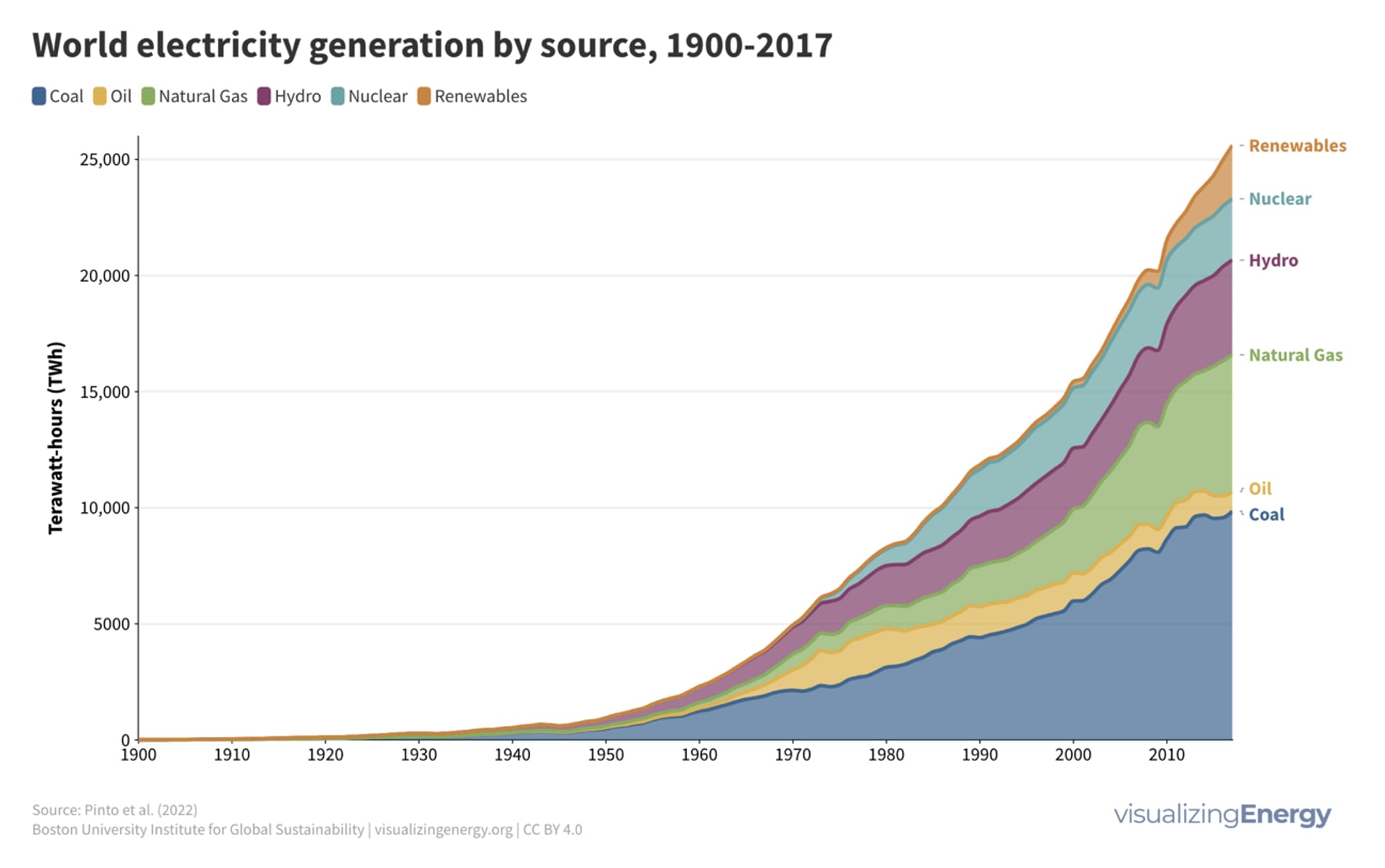

Electricity generation trends

The following gives broader context on electricity generation and consumption.

Trend in total electricity production before the rise of AI:

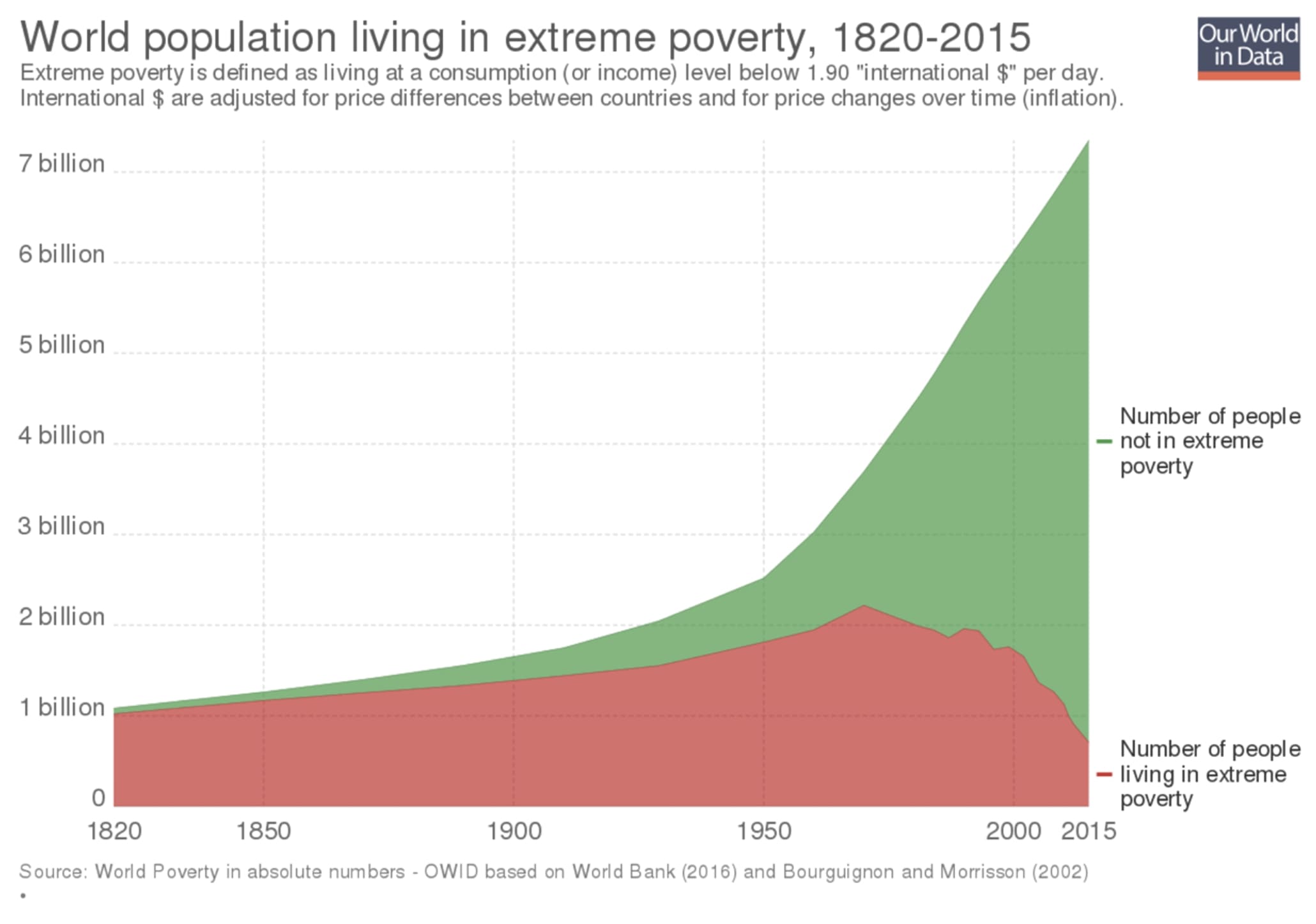

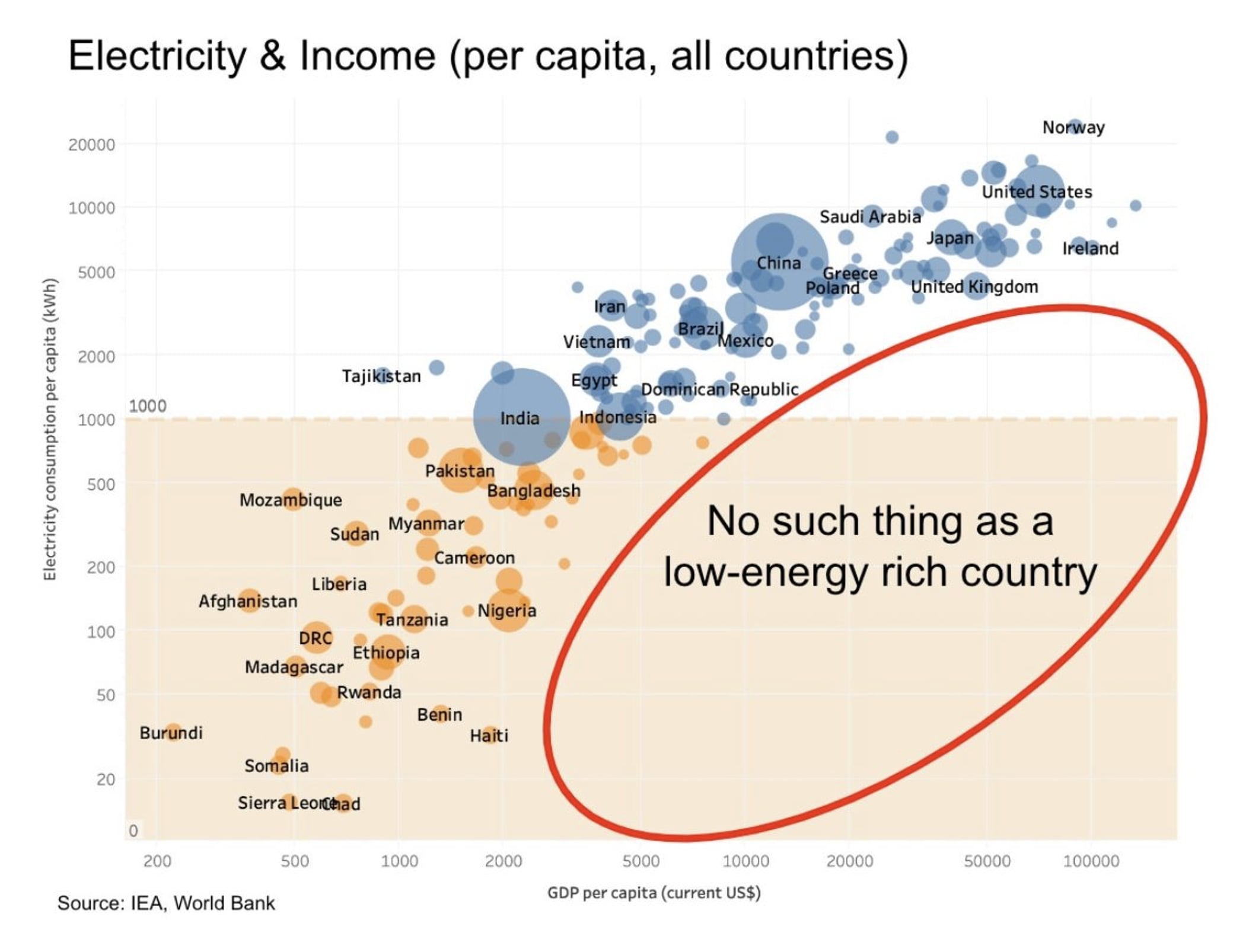

As countries become more developed, they tend to utilise more energy.

Is the clean energy problem unsolvable?

No.